External Meeting Control & Command Patterns

Send audio, chat messages, images, and playback controls into a live meeting through Meetstream’s control WebSocket channel. Works with Google Meet, Zoom, and Microsoft Teams.

Overview

When you create a bot with the socket_connection_url configuration, Meetstream opens a WebSocket connection from the bot to your server. You send JSON commands over this connection to control what the bot does inside the meeting.

Enabling the Control Channel

Include socket_connection_url in your Create Bot API request:

The websocket_url is a WebSocket endpoint you host. Meetstream connects to it as a client.
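The Python sketch below adds the control channel to an existing Create Bot request body. The websocket_url mention above suggests that socket_connection_url is an object holding a websocket_url key, and the sketch assumes that shape. The helper name add_control_channel and its ws:// / wss:// scheme check are hypothetical additions, not part of the documented API.

```python
import copy


def add_control_channel(create_bot_body: dict, websocket_url: str) -> dict:
    """Return a copy of a Create Bot request body with the control channel enabled.

    Assumption: socket_connection_url is an object holding websocket_url.
    add_control_channel is a hypothetical helper, not a Meetstream API.
    """
    # Meetstream connects to this URL as a client, so it must be a WebSocket URL
    # that Meetstream can reach (the scheme check is our own sanity check).
    if not websocket_url.startswith(("ws://", "wss://")):
        raise ValueError(f"expected a ws:// or wss:// URL, got {websocket_url!r}")
    body = copy.deepcopy(create_bot_body)
    body["socket_connection_url"] = {"websocket_url": websocket_url}
    return body


# Other Create Bot fields pass through untouched ("other_field" is a placeholder).
request_body = add_control_channel({"other_field": "unchanged"}, "wss://bots.example.com/control")
```

The helper copies the body instead of mutating it, so you can reuse one base request for several bots.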

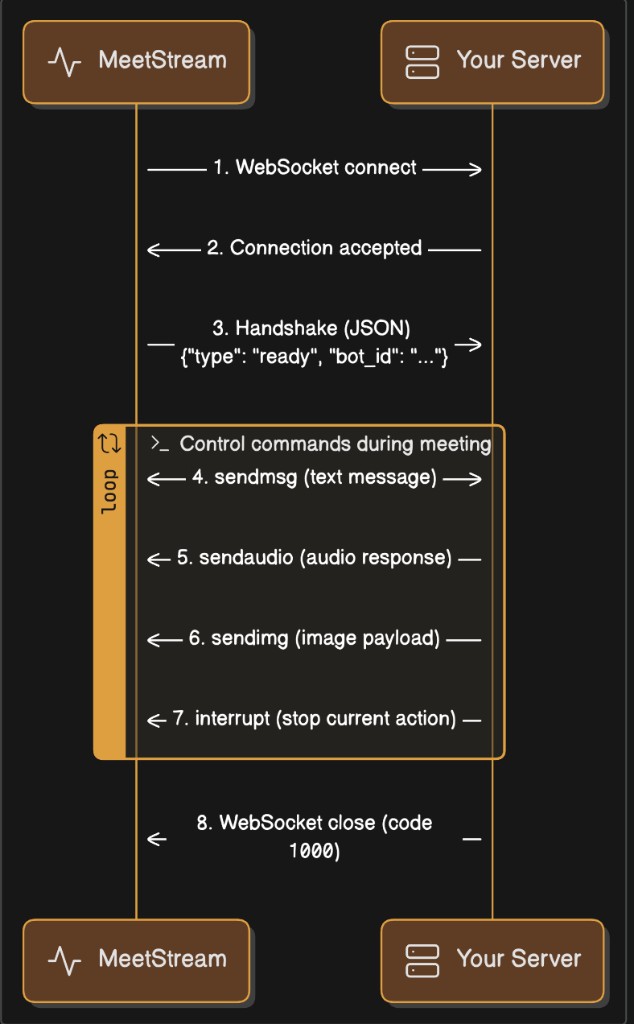

Connection Lifecycle

1. Bot connects to your WebSocket endpoint

The bot initiates the connection when it joins the meeting.

2. Bot sends a JSON text handshake

After you receive this, the bot is ready to accept commands.

3. You send JSON commands

All commands are JSON text WebSocket frames. The bot executes them in the meeting.

4. Connection closes when the bot leaves

The WebSocket closes with close code 1000 (normal closure) when the bot exits.
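The ordering of steps 2 and 3 (handshake first, then commands) can be sketched against a generic async connection object. ControlConnection, run_control_session and FakeConnection are hypothetical names. This section does not describe the handshake's fields, so the sketch only parses the handshake. The "command" key in the sample command is an assumed field name. With a real WebSocket server library, you would pass its connection object in place of FakeConnection, assuming it exposes async recv() and send(). Step 4 (the 1000 close) is left to that library.

```python
import asyncio
import json
from typing import Protocol


class ControlConnection(Protocol):
    """The two methods this sketch needs from a WebSocket connection."""

    async def recv(self) -> str: ...

    async def send(self, message: str) -> None: ...


async def run_control_session(conn: ControlConnection, commands: list) -> dict:
    """Walk through steps 2 and 3 of the lifecycle for one bot connection."""
    # Step 2: the bot's first frame is a JSON text handshake. Its fields are not
    # described here, so we just parse and return it.
    handshake = json.loads(await conn.recv())
    # Step 3: the bot now accepts commands, each sent as one JSON text frame.
    for command in commands:
        await conn.send(json.dumps(command))
    return handshake


class FakeConnection:
    """In-memory stand-in for a real WebSocket, used only to exercise the sketch."""

    def __init__(self, incoming: list):
        self.incoming = list(incoming)
        self.sent: list = []

    async def recv(self) -> str:
        return self.incoming.pop(0)

    async def send(self, message: str) -> None:
        self.sent.append(message)


fake = FakeConnection(['{"example": "handshake"}'])
handshake = asyncio.run(run_control_session(fake, [{"command": "interrupt"}]))
```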

Timeline

Command Reference

All commands share this structure:

sendaudio — Play Audio in the Meeting

Plays audio through the bot’s virtual microphone so all meeting participants hear it. Use this for text-to-speech output, pre-recorded audio prompts, or any audio your application generates.

Audio Encoding Requirements

The audiochunk field must be a base64-encoded string of raw PCM16 signed little-endian audio bytes. No WAV headers, no MP3 — just raw samples.

Encoding Audio — Python
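The sketch below uses only the standard library. It assumes your audio starts as float samples in [-1.0, 1.0], for example from a synthesis library. The function names are hypothetical. This section does not state the required sample rate or channel count, so those are left to the caller.

```python
import base64
import struct
from typing import Sequence


def float_to_pcm16le(samples: Sequence[float]) -> bytes:
    """Convert float samples in [-1.0, 1.0] to raw signed 16-bit little-endian PCM."""
    # Clamp first so out-of-range values cannot overflow the 16-bit range.
    ints = [int(max(-1.0, min(1.0, s)) * 32767) for s in samples]
    # "<" means little-endian, "h" means signed 16-bit; no header is written.
    return struct.pack(f"<{len(ints)}h", *ints)


def encode_audiochunk(pcm: bytes) -> str:
    """Base64-encode raw PCM16 bytes for the audiochunk field."""
    if len(pcm) % 2 != 0:
        raise ValueError("PCM16 data must contain a whole number of 2-byte samples")
    return base64.b64encode(pcm).decode("ascii")


pcm = float_to_pcm16le([0.0, 1.0, -1.0])
audiochunk = encode_audiochunk(pcm)
```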

Encoding Audio — JavaScript / Node.js

Sending a WAV File — Python
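The following is a standard-library sketch using the wave module. readframes() returns only the sample data, so the WAV header is dropped automatically. wav_to_audiochunk is a hypothetical name. The function returns the file's sample rate and channel count so you can check them against whatever the bot expects, because this section does not state those values. Resampling is not shown.

```python
import base64
import wave


def wav_to_audiochunk(path: str) -> tuple[str, int, int]:
    """Strip the WAV header and return (base64 PCM16, sample_rate, channels)."""
    with wave.open(path, "rb") as wav:
        # audiochunk must be PCM16, so reject 8-, 24- and 32-bit files.
        if wav.getsampwidth() != 2:
            raise ValueError(f"expected 16-bit samples, got {8 * wav.getsampwidth()}-bit")
        sample_rate = wav.getframerate()
        channels = wav.getnchannels()
        # 16-bit PCM in a WAV file is already signed little-endian,
        # so the frame bytes can be encoded without conversion.
        pcm = wav.readframes(wav.getnframes())
    return base64.b64encode(pcm).decode("ascii"), sample_rate, channels
```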

Chunked Audio Streaming

For long audio (TTS streams, file playback), send audio in chunks rather than one large command. A good chunk size is 0.5–2 seconds:
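The sketch below splits raw PCM16 into sendaudio frames of roughly chunk_seconds each. It rounds every chunk to whole frames so that no sample is split across two commands. The function name and the "command" key are assumptions; audiochunk is the documented field. The 16 kHz mono figure in the usage line is only an example value, not a documented requirement.

```python
import base64
import json
from typing import Iterator


def sendaudio_commands(pcm: bytes, sample_rate: int, channels: int = 1,
                       chunk_seconds: float = 1.0) -> Iterator[str]:
    """Split raw PCM16 audio into sendaudio frames of about chunk_seconds each."""
    bytes_per_frame = 2 * channels  # 2 bytes per 16-bit sample, per channel
    if len(pcm) % bytes_per_frame != 0:
        raise ValueError("PCM length is not a whole number of frames")
    # Size chunks in whole frames so a sample never straddles two commands.
    frames_per_chunk = max(1, int(sample_rate * chunk_seconds))
    step = frames_per_chunk * bytes_per_frame
    for start in range(0, len(pcm), step):
        chunk = pcm[start:start + step]
        # "command" is an assumed key name; audiochunk is the documented field.
        yield json.dumps({
            "command": "sendaudio",
            "audiochunk": base64.b64encode(chunk).decode("ascii"),
        })


# 2.5 s of 16 kHz mono silence (80,000 bytes) yields chunks of 1.0 s, 1.0 s and 0.5 s.
commands = list(sendaudio_commands(bytes(80000), sample_rate=16000))
```

This section does not say whether the bot expects chunks paced in real time. Sending them back to back relies on the bot's playback queue to buffer them.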

sendmsg — Send a Chat Message

Posts a text message in the meeting’s chat panel, visible to all participants.

Why both message and msg? Different platforms read different fields internally. Always include both with the same value for cross-platform compatibility.

Example — Python
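The sketch below builds a sendmsg frame that carries the same text in message and msg, as recommended above. The "command" key and the helper names are assumptions. post_chat works with any connection object that has an async send(str) method.

```python
import json


def sendmsg_command(text: str) -> str:
    """Build a sendmsg frame carrying the same text in both message and msg."""
    # message and msg come from the docs above; the "command" key is an assumption.
    return json.dumps({"command": "sendmsg", "message": text, "msg": text})


async def post_chat(conn, text: str) -> None:
    """Send the chat message over an open control connection."""
    await conn.send(sendmsg_command(text))


frame = sendmsg_command("Recording has started.")
```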

Example — JavaScript

sendchat — Send a Chat Message with Role and Streaming

An extended chat command that supports agent/user role tagging and incremental streaming.

Streaming Pattern

Send partial messages as they are generated, then a final complete message:
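The streaming pattern can be sketched as a generator that yields partial frames as text arrives and then one final frame. The wire format here is hypothetical. The field names role, message and is_final are assumptions, and so is the choice to make each partial carry the full text generated so far rather than only the new piece. Only the agent/user roles and the partial-then-final ordering come from the text above.

```python
import json
from typing import Iterable, Iterator


def sendchat_stream(pieces: Iterable[str], role: str = "agent") -> Iterator[str]:
    """Yield partial sendchat frames as text arrives, then one final complete frame.

    Hypothetical wire format: "command", "role", "message" and "is_final" are
    assumed field names, and partials are assumed to be cumulative.
    """
    if role not in ("agent", "user"):
        raise ValueError("role must be 'agent' or 'user'")
    text = ""
    for piece in pieces:
        text += piece
        yield json.dumps({"command": "sendchat", "role": role,
                          "message": text, "is_final": False})
    # The closing frame repeats the complete message and marks it final.
    yield json.dumps({"command": "sendchat", "role": role,
                      "message": text, "is_final": True})


frames = [json.loads(f) for f in sendchat_stream(["The meeting ", "notes are ready."])]
```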

interrupt — Stop Audio Playback

Immediately stops any audio currently playing through the bot’s virtual microphone and clears the audio playback queue. Use this for barge-in (when a human starts speaking while the bot is talking) or to cancel a response.

Platform Support

Example — Barge-In Pattern
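Here is a minimal asyncio sketch of barge-in. It assumes your server streams sendaudio frames from a background task. When your own speech detection fires, the sketch first cancels that task so no further chunks leave your server, then sends interrupt so the bot drops what it already queued. BotSpeaker, RecordingConnection and the "command" key are hypothetical. Detecting the participant's speech is out of scope and is represented by a comment.

```python
import asyncio
import json


class BotSpeaker:
    """Tracks the task streaming sendaudio frames so a barge-in can cancel it."""

    def __init__(self, conn):
        self.conn = conn  # any object with an async send(str) method
        self.task = None

    def play(self, frames: list) -> None:
        self.task = asyncio.create_task(self._stream(frames))

    async def _stream(self, frames: list) -> None:
        for frame in frames:
            await self.conn.send(frame)
            await asyncio.sleep(0)  # a real sender might pace by chunk duration

    async def barge_in(self) -> None:
        # 1. Stop sending chunks that have not left our server yet.
        if self.task is not None and not self.task.done():
            self.task.cancel()
            try:
                await self.task
            except asyncio.CancelledError:
                pass
        # 2. Tell the bot to stop playback and clear the audio it already queued.
        await self.conn.send(json.dumps({"command": "interrupt"}))


class RecordingConnection:
    """In-memory stand-in that records every frame sent."""

    def __init__(self):
        self.sent = []

    async def send(self, message: str) -> None:
        self.sent.append(message)


async def demo() -> list:
    conn = RecordingConnection()
    speaker = BotSpeaker(conn)
    speaker.play([f"audio-frame-{i}" for i in range(100)])
    await asyncio.sleep(0)  # let playback start
    # At this point your speech detection has noticed a participant talking.
    await speaker.barge_in()
    return conn.sent


sent = asyncio.run(demo())
```

The order of the two steps matters. If you send interrupt first, chunks still in flight from your own task would refill the bot's queue right after it is cleared.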

sendimg — Set Bot Video Frame (Base64)

Sets the bot’s camera feed to a static image. The image is displayed as the bot’s video in the meeting.

Example — Python
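A minimal sketch of building a sendimg frame from a local image file follows. This section does not name the JSON fields, so "command" and "img" are hypothetical. Sending plain base64 rather than a data: URI is also an assumption, and which image formats the bot accepts is not stated here.

```python
import base64
import json
from pathlib import Path


def sendimg_command(image_path: str) -> str:
    """Read an image file and wrap it, base64-encoded, in a sendimg frame."""
    encoded = base64.b64encode(Path(image_path).read_bytes()).decode("ascii")
    # Hypothetical field names: "command" and "img" are assumptions, as is
    # sending plain base64 rather than a data: URI.
    return json.dumps({"command": "sendimg", "img": encoded})
```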

sendimg_url — Set Bot Video Frame (URL)

Same as sendimg but provides a URL instead of inline base64. The bot downloads the image and sets it as its video frame.
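Because the bot fetches the image itself, checking that the URL is absolute before sending it can catch mistakes early. The check below is our own addition, not a documented rule. The field names "command" and "img_url" are hypothetical.

```python
import json
from urllib.parse import urlparse


def sendimg_url_command(image_url: str) -> str:
    """Build a sendimg_url frame after checking the URL is absolute http(s)."""
    parsed = urlparse(image_url)
    # The bot downloads the image, so a relative or non-HTTP URL is unlikely to work.
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        raise ValueError(f"expected an absolute http(s) URL, got {image_url!r}")
    # "command" and "img_url" are assumed field names.
    return json.dumps({"command": "sendimg_url", "img_url": image_url})
```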

Full Server Examples

Python Server (FastAPI)

A complete server that accepts both the audio and control channels, logs audio, and sends a welcome message.

Run:

Node.js Server (ws)

Using Both Channels Together

When you enable both live_audio_required and socket_connection_url, the bot opens two independent WebSocket connections to your server. A typical Create Bot request:
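Since the bot opens two independent connections, one common arrangement is to serve the two channels on different paths of the same server and route each incoming connection by its path. The sketch assumes that live_audio_required, like socket_connection_url, is an object holding a websocket_url. The /audio and /control paths and both helper names are hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical paths on your server; Meetstream does not prescribe them.
AUDIO_PATH = "/audio"
CONTROL_PATH = "/control"


def add_both_channels(create_bot_body: dict, base_url: str) -> dict:
    """Point the audio and control channels at two paths on one server."""
    base = base_url.rstrip("/")
    body = dict(create_bot_body)
    # Assumed shapes: both settings are objects holding a websocket_url.
    body["live_audio_required"] = {"websocket_url": base + AUDIO_PATH}
    body["socket_connection_url"] = {"websocket_url": base + CONTROL_PATH}
    return body


def channel_for(request_path: str) -> str:
    """Route an incoming bot connection to the right handler by its URL path."""
    path = urlparse(request_path).path
    if path == AUDIO_PATH:
        return "audio"
    if path == CONTROL_PATH:
        return "control"
    raise LookupError(f"unexpected WebSocket path: {path!r}")


body = add_both_channels({}, "wss://bots.example.com/")
```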